Quantitative researchers are often interested in being able to make generalizations about groups larger than their study samples. While there are certainly instances when quantitative researchers rely on nonprobability samples (e.g., when doing exploratory or evaluation research), quantitative researchers tend to rely on probability sampling techniques. The goals and techniques associated with probability samples differ from those of nonprobability samples. We’ll explore those unique goals and techniques in this section.

Unlike nonprobability sampling, probability sampling refers to sampling techniques for which a person’s (or event’s) likelihood of being selected for membership in the sample is known. You might ask yourself why we should care about a study element’s likelihood of being selected for membership in a researcher’s sample. The reason is that, in most cases, researchers who use probability sampling techniques are aiming to identify a representative sample from which to collect data. A representative sample is one that resembles the population from which it was drawn in all the ways that are important for the research being conducted. If, for example, you wish to be able to say something about differences between men and women at the end of your study, you had better make sure that your sample doesn’t contain only women. That’s a bit of an oversimplification, but the point with representativeness is that if your population varies in some way that is important to your study, your sample should contain the same sorts of variation.

Obtaining a representative sample is important in probability sampling because a key goal of studies that rely on probability samples is generalizability. In fact, generalizability is perhaps the key feature that distinguishes probability samples from nonprobability samples. Generalizability refers to the idea that a study’s results will tell us something about a group larger than the sample from which the findings were generated. In order to achieve generalizability, a core principle of probability sampling is that all elements in the researcher’s target population have an equal chance of being selected for inclusion in the study. In research, this is the principle of random selection. Random selection is a mathematical process that we won’t go into too much depth about here, but if you have taken or plan to take a statistics course, you’ll learn more about it there. The important thing to remember about random selection here is that, as previously noted, it is a core principle of probability sampling. If a researcher uses random selection techniques to draw a sample, he or she will be able to estimate how closely the sample represents the larger population from which it was drawn by estimating the sampling error. Sampling error is a statistical calculation of the difference between results from a sample and the actual parameters of a population. Parameters are the actual characteristics of a population on any given variable; they are determined by measuring all elements in a population (as opposed to measuring elements from a sample).
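To make the idea of sampling error concrete, here is a minimal sketch in Python. The population of ages is made up for illustration, and the sketch can compute the error directly only because this toy population is fully known. In real research the parameter is unknown, which is why sampling error has to be estimated.

```python
import random
import statistics

# Hypothetical population: ages of 1,000 people (made-up values for illustration).
rng = random.Random(42)
population = [rng.randint(18, 90) for _ in range(1000)]

# Parameter: the actual population mean, known here only because we measured everyone.
population_mean = statistics.mean(population)

# Statistic: the mean of a randomly selected sample of 100 people.
sample = rng.sample(population, 100)
sample_mean = statistics.mean(sample)

# Sampling error on this variable: the gap between the sample result and the parameter.
sampling_error = sample_mean - population_mean

print(f"population mean = {population_mean:.2f}")
print(f"sample mean     = {sample_mean:.2f}")
print(f"sampling error  = {sampling_error:+.2f}")
```

Rerunning the sketch with a different seed, or a different sample size, gives a different error each time, which is the variation that statistical estimates of sampling error describe.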

There are a variety of probability samples that researchers may use. These include simple random samples, systematic samples, stratified samples, and cluster samples.

Simple random samples are the most basic type of probability sample, but their use is not particularly common. Part of the reason for this may be the work involved in generating a simple random sample. To draw a simple random sample, a researcher starts with a list of every single member, or element, of his or her population of interest. This list of all elements in a population is sometimes referred to as a sampling frame. Once that list has been created, the researcher numbers each element sequentially and then randomly selects the elements from which he or she will collect data. To randomly select elements, researchers use a table of numbers that have been generated randomly. There are several possible sources for obtaining a random number table. Some statistics and research methods textbooks offer such tables as appendices to the text. Perhaps a more accessible source is one of the many free random number generators available on the Internet. A good online source is the website Stat Trek, which contains a random number generator that you can use to create a random number table of whatever size you might need (http://stattrek.com/Tables/Random.aspx). Randomizer.org also offers a useful random number generator (http://randomizer.org).
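If you would rather not work from a printed table, the same procedure can be sketched with a few lines of Python. This is only an illustration: the sampling frame of 100 numbered people and the function name `simple_random_sample` are hypothetical, and Python’s built-in pseudorandom generator stands in for the random number table.

```python
import random

# Hypothetical sampling frame: every member of the population, numbered sequentially.
sampling_frame = {number: f"Person {number}" for number in range(1, 101)}

def simple_random_sample(frame, sample_size, seed=None):
    """Randomly select `sample_size` element numbers from the frame, without replacement."""
    rng = random.Random(seed)
    chosen_numbers = rng.sample(sorted(frame), sample_size)
    return sorted(chosen_numbers)

# Draw 25 of the 100 elements; the seed only makes the draw repeatable.
selected = simple_random_sample(sampling_frame, 25, seed=7)
print([sampling_frame[number] for number in selected])
```

Because the draw is made without replacement, no element can be selected twice, and every element on the list has the same chance of ending up in the sample.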

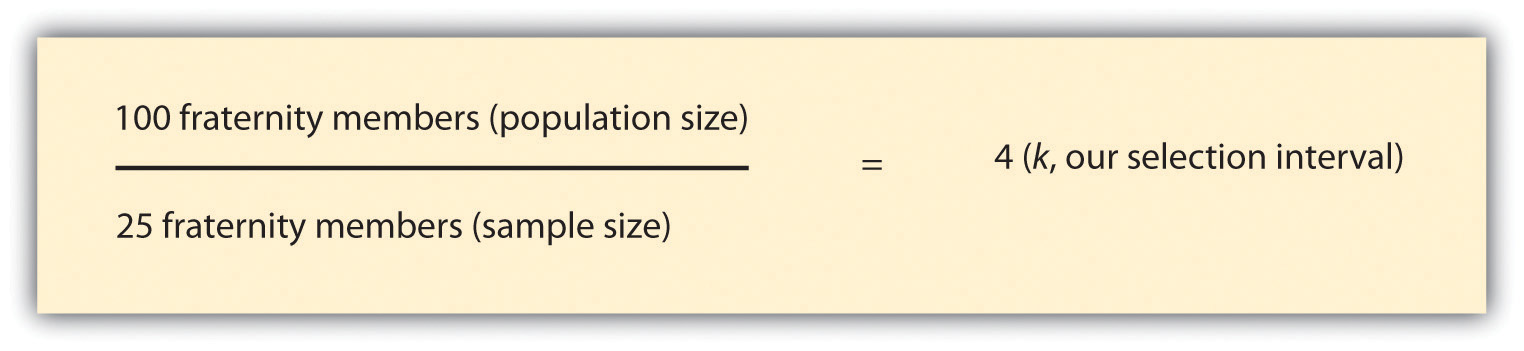

As you might have guessed, drawing a simple random sample can be quite tedious. Systematic sampling techniques are somewhat less tedious but offer the benefits of a random sample. As with simple random samples, you must be able to produce a list of every one of your population elements. Once you’ve done that, to draw a systematic sample you’d simply select every kth element on your list. But what is k, and where on the list of population elements does one begin the selection process? k is your selection interval, or the distance between the elements you select for inclusion in your study. To begin the selection process, you’ll need to figure out how many elements you wish to include in your sample. Let’s say you want to interview 25 fraternity members on your campus, and there are 100 men on campus who are members of fraternities. In this case, your selection interval, or k, is 4. To arrive at 4, simply divide the total number of population elements by your desired sample size. This process is represented in Figure 7.5 "Formula for Determining Selection Interval for Systematic Sample".

Figure 7.5 Formula for Determining Selection Interval for Systematic Sample
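The figure itself is not reproduced here. Based on the description in the text, the formula can be written as follows, where the symbols N (number of population elements) and n (desired sample size) are notation assumed for this sketch rather than taken from the original figure:

$$
k = \frac{N}{n} = \frac{100}{25} = 4
$$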

To determine where on your list of population elements to begin selecting the names of the 25 men you will interview, select a random number between 1 and k, and begin there. If we randomly select 3 as our starting point, we’d begin by selecting the third fraternity member on the list and then select every fourth member from there. This might be easier to understand if you can see it visually. Table 7.2 "Systematic Sample of 25 Fraternity Members" lists the names of our hypothetical 100 fraternity members on campus. You’ll see that the third name on the list has been selected for inclusion in our hypothetical study, as has every fourth name after that. A total of 25 names have been selected.

Table 7.2 Systematic Sample of 25 Fraternity Members

| Number | Name | Include in study? | Number | Name | Include in study? |
|---|---|---|---|---|---|
| 1 | Jacob | | 51 | Blake | Yes |
| 2 | Ethan | | 52 | Oliver | |
| 3 | Michael | Yes | 53 | Cole | |
| 4 | Jayden | | 54 | Carlos | |
| 5 | William | | 55 | Jaden | Yes |
| 6 | Alexander | | 56 | Jesus | |
| 7 | Noah | Yes | 57 | Alex | |
| 8 | Daniel | | 58 | Aidan | |
| 9 | Aiden | | 59 | Eric | Yes |
| 10 | Anthony | | 60 | Hayden | |
| 11 | Joshua | Yes | 61 | Brian | |
| 12 | Mason | | 62 | Max | |
| 13 | Christopher | | 63 | Jaxon | Yes |
| 14 | Andrew | | 64 | Brian | |
| 15 | David | Yes | 65 | Matthew | |
| 16 | Logan | | 66 | Elijah | |
| 17 | James | | 67 | Joseph | Yes |
| 18 | Gabriel | | 68 | Benjamin | |
| 19 | Ryan | Yes | 69 | Samuel | |
| 20 | Jackson | | 70 | John | |
| 21 | Nathan | | 71 | Jonathan | Yes |
| 22 | Christian | | 72 | Liam | |
| 23 | Dylan | Yes | 73 | Landon | |
| 24 | Caleb | | 74 | Tyler | |
| 25 | Lucas | | 75 | Evan | Yes |
| 26 | Gavin | | 76 | Nicholas | |
| 27 | Isaac | Yes | 77 | Braden | |
| 28 | Luke | | 78 | Angel | |
| 29 | Brandon | | 79 | Jack | Yes |
| 30 | Isaiah | | 80 | Jordan | |
| 31 | Owen | Yes | 81 | Carter | |
| 32 | Conner | | 82 | Justin | |
| 33 | Jose | | 83 | Jeremiah | Yes |
| 34 | Julian | | 84 | Robert | |
| 35 | Aaron | Yes | 85 | Adrian | |
| 36 | Wyatt | | 86 | Kevin | |
| 37 | Hunter | | 87 | Cameron | Yes |
| 38 | Zachary | | 88 | Thomas | |
| 39 | Charles | Yes | 89 | Austin | |
| 40 | Eli | | 90 | Chase | |
| 41 | Henry | | 91 | Sebastian | Yes |
| 42 | Jason | | 92 | Levi | |
| 43 | Xavier | Yes | 93 | Ian | |
| 44 | Colton | | 94 | Dominic | |
| 45 | Juan | | 95 | Cooper | Yes |
| 46 | Josiah | | 96 | Luis | |
| 47 | Ayden | Yes | 97 | Carson | |
| 48 | Adam | | 98 | Nathaniel | |
| 49 | Brody | | 99 | Tristan | Yes |
| 50 | Diego | | 100 | Parker | |

Note: In case you’re wondering how I came up with 100 unique names for this table, I’ll let you in on a little secret: lists of popular baby names can be great resources for researchers. I used the list of top 100 names for boys based on Social Security Administration statistics for this table. I often use baby name lists to come up with pseudonyms for field research subjects and interview participants. See Family Education. (n.d.). Name lab. Retrieved from http://baby-names.familyeducation.com/popular-names/boys.
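The selection shown in Table 7.2 can be reproduced with a short Python sketch. The function name `systematic_sample` and its parameters are hypothetical, and the sketch assumes the population size divides evenly by the desired sample size, as it does in the fraternity example.

```python
import random

def systematic_sample(frame, sample_size, start=None, seed=None):
    """Select every kth element of `frame`, beginning at a random start between 1 and k."""
    k = len(frame) // sample_size              # selection interval
    if start is None:
        start = random.Random(seed).randint(1, k)
    # Positions are 1-based, as on a numbered list; convert to 0-based indexes.
    positions = range(start, len(frame) + 1, k)
    return [frame[position - 1] for position in positions][:sample_size]

# The 100 fraternity members, represented here by their list numbers.
members = list(range(1, 101))

# Starting at the third name with an interval of 4, as in the example above.
selected = systematic_sample(members, 25, start=3)
print(selected[:5], "...", selected[-1])   # [3, 7, 11, 15, 19] ... 99
```

Leaving `start` unset lets the function pick the starting point at random, which is what a researcher would do in practice.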

There is one clear instance in which systematic sampling should not be employed. If your sampling frame has any pattern to it, you could inadvertently introduce bias into your sample by using a systematic sampling strategy. This is sometimes referred to as the problem of periodicity. Periodicity refers to the tendency for a pattern to occur at regular intervals. Let’s say, for example, that you wanted to observe how people use the outdoor public spaces on your campus. Perhaps you need to have your observations completed within 28 days and you wish to conduct four observations on randomly chosen days. Table 7.3 "Systematic Sample of Observation Days" shows a list of the population elements for this example. To determine which days we’ll conduct our observations, we’ll need to determine our selection interval. As you’ll recall from the preceding paragraphs, to do so we must divide our population size, in this case 28 days, by our desired sample size, in this case 4 days. This formula leads us to a selection interval of 7. If we randomly select 2 as our starting point and select every seventh day after that, we’ll wind up with a total of 4 days on which to conduct our observations. You’ll see how that works out in the following table.

Table 7.3 Systematic Sample of Observation Days

| Number | Day | Include in study? | Number | Day | Include in study? |
|---|---|---|---|---|---|
| 1 | Monday | | 15 | Monday | |
| 2 | Tuesday | Yes | 16 | Tuesday | Yes |
| 3 | Wednesday | | 17 | Wednesday | |
| 4 | Thursday | | 18 | Thursday | |
| 5 | Friday | | 19 | Friday | |
| 6 | Saturday | | 20 | Saturday | |
| 7 | Sunday | | 21 | Sunday | |
| 8 | Monday | | 22 | Monday | |
| 9 | Tuesday | Yes | 23 | Tuesday | Yes |
| 10 | Wednesday | | 24 | Wednesday | |
| 11 | Thursday | | 25 | Thursday | |
| 12 | Friday | | 26 | Friday | |
| 13 | Saturday | | 27 | Saturday | |
| 14 | Sunday | | 28 | Sunday | |

Do you notice any problems with our selection of observation days? Apparently we’ll only be observing on Tuesdays. As you have probably figured out, that isn’t such a good plan if we really wish to understand how public spaces on campus are used. My guess is that weekend use probably differs from weekday use, and that use may even vary during the week, just as class schedules do. In cases such as this, where the sampling frame is cyclical, it would be better to use a stratified sampling technique. In stratified sampling, a researcher will divide the study population into relevant subgroups and then draw a sample from each subgroup. In this example, we might wish to first divide our sampling frame into two lists: weekend days and weekdays. Once we have our two lists, we can then apply either simple random or systematic sampling techniques to each subgroup.
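The Tuesday problem is easy to reproduce. The following Python sketch builds the 28-day sampling frame from Table 7.3 (assuming, as in the table, that day 1 is a Monday) and applies the selection interval of 7 with a starting point of 2.

```python
days_of_week = ["Monday", "Tuesday", "Wednesday", "Thursday",
                "Friday", "Saturday", "Sunday"]

# Sampling frame: 28 consecutive days, beginning on a Monday as in Table 7.3.
frame = [days_of_week[index % 7] for index in range(28)]

sample_size = 4
k = len(frame) // sample_size      # selection interval: 28 / 4 = 7
start = 2                          # the starting point chosen in the example

# Select every kth day, using 1-based positions as on a numbered list.
selected_days = [frame[position - 1] for position in range(start, len(frame) + 1, k)]
print(selected_days)               # ['Tuesday', 'Tuesday', 'Tuesday', 'Tuesday']
```

Because the selection interval matches the length of the weekly cycle, any starting point would yield the same weekday four times over; only which weekday changes.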

Stratified sampling is a good technique to use when, as in our example, a subgroup of interest makes up a relatively small proportion of the overall population. In our example of a study of use of public space on campus, we want to be sure to include weekdays and weekends in our sample, but because weekends make up less than a third of an entire week, there’s a chance that a simple random or systematic strategy would not yield sufficient weekend observation days. As you might imagine, stratified sampling is even more useful in cases where a subgroup makes up an even smaller proportion of the study population. Say, for example, that we want to be sure to include both men’s and women’s perspectives in a study, but men make up only a small percentage of the population. There’s a chance that a simple random or systematic sampling strategy might not yield any male participants, but by using stratified sampling, we could ensure that our sample contained the proportion of men that is reflective of the larger population.
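As a rough sketch of how this might look in code, the Python example below draws a simple random sample from within each subgroup, sized in proportion to that subgroup’s share of the population. The population of 180 women and 20 men, the identifiers, and the function name `stratified_sample` are all hypothetical.

```python
import random

def stratified_sample(strata, sample_size, seed=None):
    """Draw a simple random sample from each stratum, proportionate to its size."""
    rng = random.Random(seed)
    total = sum(len(members) for members in strata.values())
    sample = {}
    for name, members in strata.items():
        # Proportionate allocation. In general, rounding can shift the overall
        # sample size by an element or two; here the numbers divide evenly.
        stratum_size = round(sample_size * len(members) / total)
        sample[name] = rng.sample(members, stratum_size)
    return sample

# Hypothetical study population in which men are only 10 percent of the elements.
strata = {
    "women": [f"W{number}" for number in range(1, 181)],
    "men": [f"M{number}" for number in range(1, 21)],
}

sample = stratified_sample(strata, 20, seed=1)
print({name: len(chosen) for name, chosen in sample.items()})   # {'women': 18, 'men': 2}
```

However the random draws fall, the sample always contains two men, which a simple random sample of 20 from the same population could not guarantee.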

Up to this point in our discussion of probability samples, we’ve assumed that researchers will be able to access a list of population elements in order to create a sampling frame. This, as you might imagine, is not always the case. Let’s say, for example, that you wish to conduct a study of hairstyle preferences across the United States. Just imagine trying to create a list of every single person with (and without) hair in the country. Basically, we’re talking about a list of every person in the country. Even if you could find a way to generate such a list, attempting to do so might not be the most practical use of your time or resources. When this is the case, researchers turn to cluster sampling. Cluster sampling occurs when a researcher begins by sampling groups (or clusters) of population elements and then selects elements from within those groups.

Let’s take a look at a couple more examples. Perhaps you are interested in the workplace experiences of public librarians. Chances are good that obtaining a list of all librarians who work for public libraries would be rather difficult. But I’ll bet you could come up with a list of all public libraries without too much hassle. Thus you could draw a random sample of libraries (your clusters) and then draw another random sample of elements (in this case, librarians) from within the libraries you initially selected. Cluster sampling works in stages. In this example, we sampled in two stages. As you might have guessed, sampling in multiple stages does introduce the possibility of greater error (each stage is subject to its own sampling error), but it is nevertheless a highly efficient method.
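The two stages of the librarian example can be sketched in Python as follows. Everything about the data is invented for illustration: the 50 libraries, their staff lists, and the choice of 10 libraries and 3 librarians per library are assumptions, not figures from an actual study.

```python
import random

rng = random.Random(3)

# Hypothetical list of clusters: 50 public libraries, each with 4 to 12 librarians.
libraries = {
    f"Library {i}": [f"Librarian {i}-{j}" for j in range(1, rng.randint(4, 12) + 1)]
    for i in range(1, 51)
}

# Stage 1: draw a random sample of clusters (libraries).
selected_libraries = rng.sample(sorted(libraries), 10)

# Stage 2: draw a random sample of elements (librarians) within each selected cluster.
selected_librarians = []
for library in selected_libraries:
    staff = libraries[library]
    selected_librarians.extend(rng.sample(staff, 3))

print(len(selected_libraries), "libraries,", len(selected_librarians), "librarians")
```

Notice that the only complete list needed up front is the list of libraries. Staff lists are required only for the 10 libraries chosen in the first stage, which is where the efficiency of cluster sampling comes from.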

Jessica Holt and Wayne Gillespie (2008) used cluster sampling in their study of students’ experiences with violence in intimate relationships. (See Holt, J. L., & Gillespie, W. (2008). Intergenerational transmission of violence, threatened egoism, and reciprocity: A test of multiple psychosocial factors affecting intimate partner violence. American Journal of Criminal Justice, 33, 252–266.) Specifically, the researchers randomly selected 14 classes on their campus and then drew a random subsample of students from those classes. But you probably know from your experience with college classes that not all classes are the same size. So if Holt and Gillespie had simply randomly selected 14 classes and then selected the same number of students from each class to complete their survey, then students in the smaller of those classes would have had a greater chance of being selected for the study than students in the larger classes. Keep in mind that with random sampling the goal is to make sure that each element has the same chance of being selected. When clusters are of different sizes, as in the example of sampling college classes, researchers often use a method called probability proportionate to size (PPS), a cluster sampling technique in which each cluster is given a chance of selection based on its size. This means that they take into account that their clusters are of different sizes. They do this by giving clusters different chances of being selected based on their size so that each element within those clusters winds up having an equal chance of being selected.
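A little arithmetic shows why PPS evens things out. The Python sketch below is a simplified illustration, not a description of Holt and Gillespie’s actual procedure: it assumes three hypothetical classes, a single class drawn at the first stage, and 10 students drawn from whichever class is selected. A student’s overall chance of selection is the chance that his or her class is picked multiplied by the chance of being picked within that class.

```python
from fractions import Fraction

# Hypothetical class sizes (clusters of unequal size).
class_sizes = {"Class A": 20, "Class B": 50, "Class C": 130}
students_per_class = 10
total_students = sum(class_sizes.values())

equal_chances = {}   # every class equally likely to be selected
pps_chances = {}     # each class selected with probability proportionate to size

for name, size in class_sizes.items():
    chance_within_class = Fraction(students_per_class, size)
    equal_chances[name] = Fraction(1, len(class_sizes)) * chance_within_class
    pps_chances[name] = Fraction(size, total_students) * chance_within_class

for name in class_sizes:
    print(f"{name}: equal selection = {equal_chances[name]}, PPS = {pps_chances[name]}")
```

With equal selection of classes, a student in the 20-person class has a 1/6 chance of being chosen while a student in the 130-person class has only a 1/39 chance. Under PPS the class size cancels out, and every student has the same 1/20 chance.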

Table 7.4 Types of Probability Samples

| Sample type | Description |
|---|---|
| Simple random | Researcher randomly selects elements from sampling frame. |
| Systematic | Researcher selects every kth element from sampling frame. |
| Stratified | Researcher creates subgroups then randomly selects elements from each subgroup. |
| Cluster | Researcher randomly selects clusters then randomly selects elements from selected clusters. |